Power Monitoring for Energy Efficient 5G/6G with OAI and USRP

Contents

- 1 Application Note Number and Authors

- 2 Authors

- 3 Executive Summary

- 4 Demonstrator Scope and Overview

- 5 Demonstrator System Architecture

- 6 1. 5G/6G Base Station Prototype (gNB)

- 7 2. NI CompactRIO Power Measurement System

- 8 3. NI Data Recording Entity / Server

- 9 4. 5G User Terminal (UE)

- 10 Overall Workflow

- 11 Demonstrator Setup

- 12 1. Device Under Test (DUT): 5G Base Station

- 13 2. NI cRIO Power Measurement System

- 14 3. Data Recording Entity

- 15 5G User Terminal (UE)

- 16 5G Sub‑System Demo Configuration (gNB + UE)

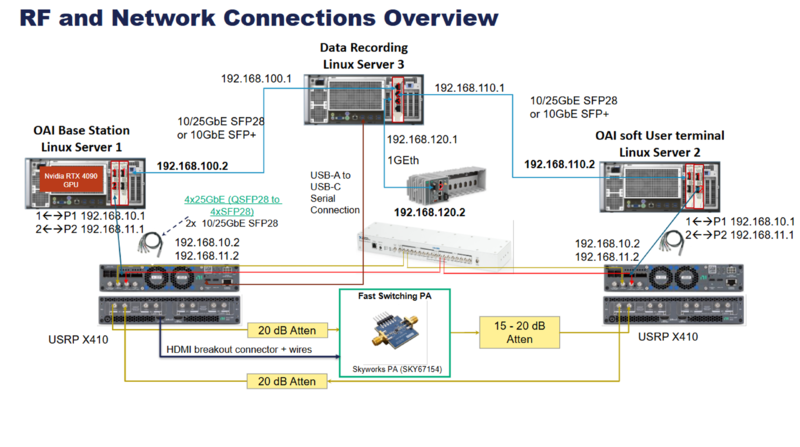

- 17 Hardware System Block Diagram Explanation

- 18 RF and Network Connections Overview

- 19 Network Interfaces

- 20 RF Signal Chain

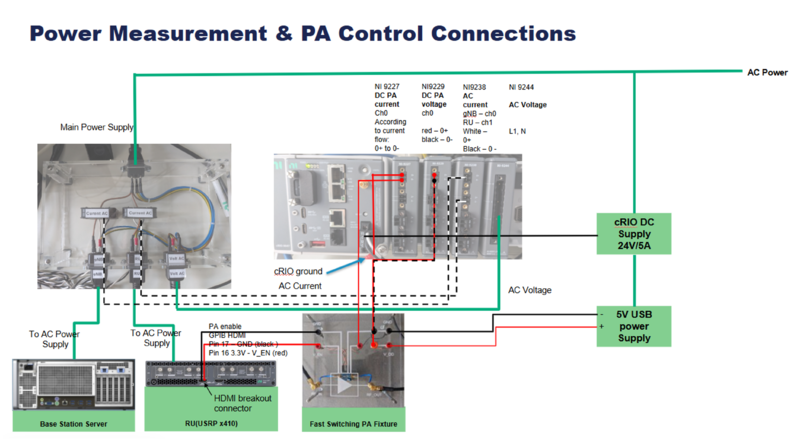

- 21 Power Measurement and PA Control Connections

- 22 Power Measurement & PA Control Connections

- 23 3. Software Installation

- 23.1 3.1 OAI gNB with Neural Rx (Linux Server 1)

- 23.2 3.2 OAI UE (Linux Server 2)

- 23.3 3.3 NI cRIO Software Installation

- 23.4 3.4 Data Recording Server Installation (Linux Server 3)

- 23.5 3.5 Software Installation Summary

- 23.6 3.3 NI cRIO Software Installation

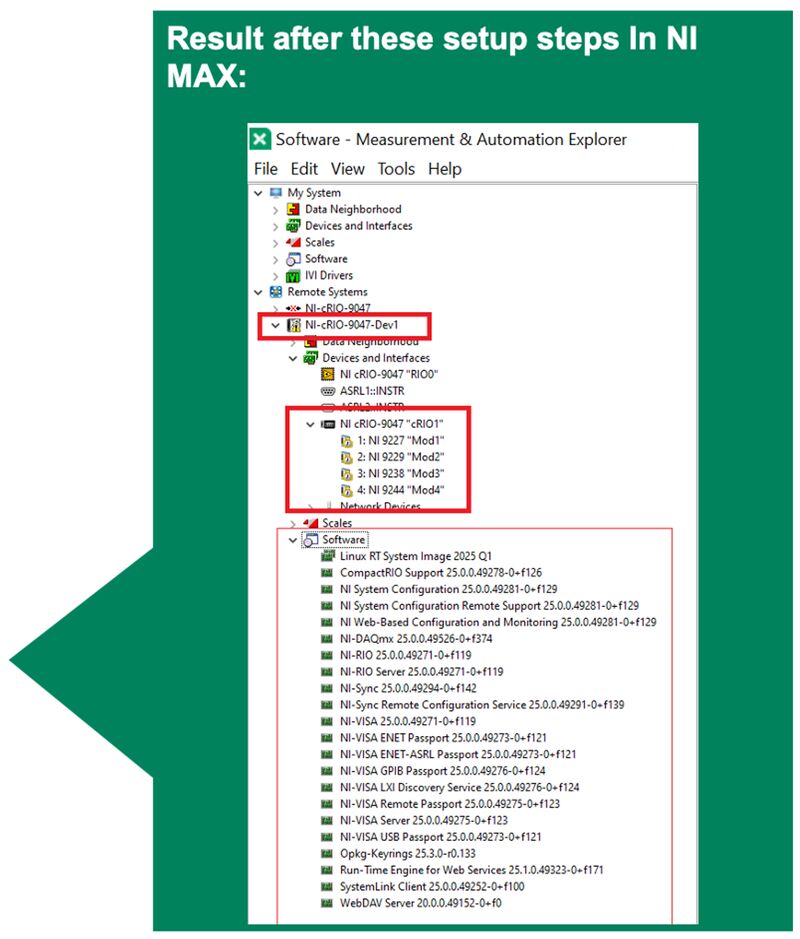

- 23.6.1 3.3.1 cRIO System Preparation

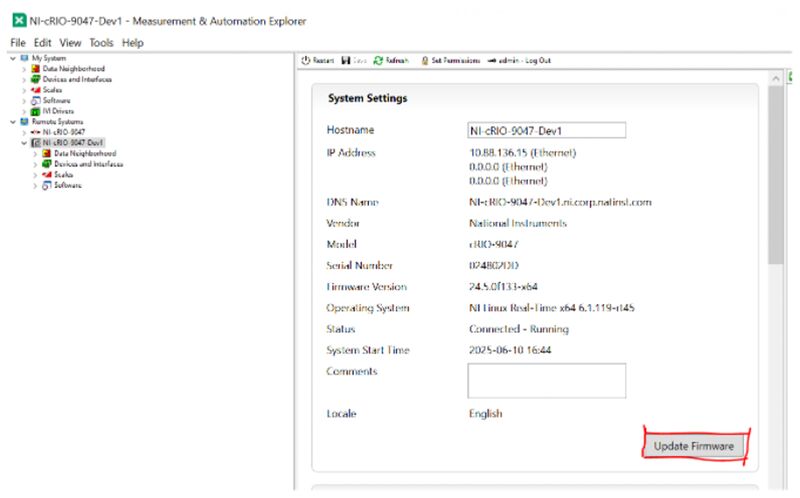

- 23.6.2 3.3.2 Firmware Update

- 23.6.3 3.3.3 Rename C Series Modules in NI MAX

- 23.6.4 3.3.4 Network Adapter and User Settings

- 23.6.5 3.3.5 Prepare cRIO Python Environment

- 23.6.6 3.3.6 Install cRIO Python Software

- 23.6.7 3.3.7 Run the gRPC Server on the cRIO

- 23.6.8 3.3.8 Install gRPC Client on Host PC

- 23.6.9 3.3.9 Verify: Run Example Measurement gRPC Client

Application Note Number and Authors

AN-844

Authors

Bharat Agarwal and Neel Pandeya

Executive Summary

Energy efficiency is becoming a critical KPI for 5G-Advanced and 6G systems, especially in Open RAN, AI-driven PHY, and Integrated Sensing and Communication (ISAC) testbeds.

Real-time power monitoring enables:

- Evaluation of baseband processing efficiency

- Measurement of RF front-end power consumption

- AI accelerator energy profiling

- Optimization of system-level energy-per-bit metrics

This application note demonstrates how to implement accurate power monitoring in a 5G/6G testbed using NI measurement hardware and software tools.

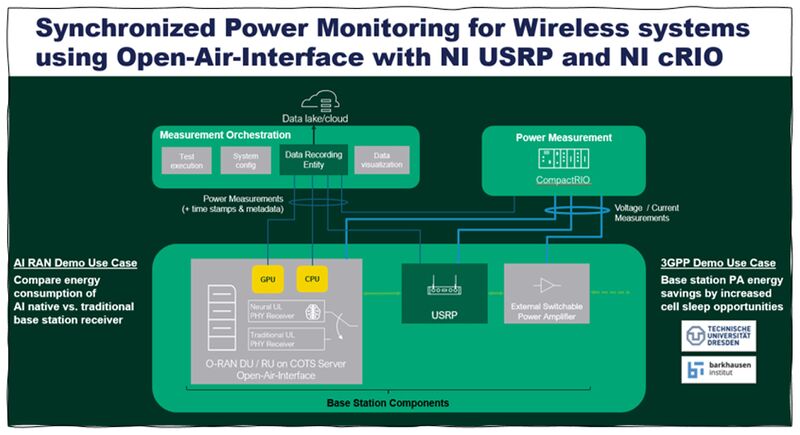

Demonstrator Scope and Overview

This demonstrator implements a measurement framework to synchronously monitor and measure power and energy consumption across multiple heterogeneous components of a wireless system.

Device Under Test (DUT)

5G/6G Base Station Prototype, consisting of:

- Linux server (baseband processing platform)

- OpenAirInterface (OAI) base station stack

- NI USRP (RF front-end)

- External switchable power amplifier (PA)

Demo Use Cases

1. 3GPP-Aligned Demo Use Case

Energy Savings via Enhanced Cell Sleep Mechanisms

- Demonstrates energy reduction at the base station power amplifier.

- Evaluates increased cell sleep opportunities.

- Quantifies real-time power savings during inactive traffic periods.

2. AI-RAN Demo Use Case

Energy Profiling of AI-Native vs. Traditional Receiver

- Measures energy consumption of:

  - AI-native base station receiver

  - Traditional (e.g., LMMSE-based) receiver

Key Feature of the Measurement Framework

All power and energy measurements are:

- Fully synchronized to a common time grid

- Aligned with the 500 µs slot grid of the 5G NR system

This synchronization enables:

- Slot-level energy analysis

- Accurate correlation between radio activity and power consumption

- Fine-grained energy profiling of PHY processing, RF transmission, and AI inference workloads
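As a rough illustration of slot-level energy analysis, the sketch below bins timestamped power samples onto the slot grid and accumulates the energy per slot. It assumes uniformly spaced samples whose timestamps are integer microseconds counted from the start of slot 0. The function names and the sample values are hypothetical and are not part of the demonstrator software.

```python
from collections import defaultdict


def slot_duration_us(scs_khz: int) -> int:
    """NR slot duration in microseconds: 1000 us at 15 kHz, halved per SCS doubling."""
    return 15000 // scs_khz


def energy_per_slot_uj(timestamps_us, power_w, sample_period_us, scs_khz=30):
    """Accumulate power * sample period (W * us = uJ) into the slot holding each sample."""
    t_slot = slot_duration_us(scs_khz)
    energy = defaultdict(float)
    for t_us, p_w in zip(timestamps_us, power_w):
        energy[t_us // t_slot] += p_w * sample_period_us
    return dict(energy)


# Hypothetical data: 10 samples at 100 us spacing, i.e. 5 samples per 500 us slot.
timestamps = [k * 100 for k in range(10)]
power = [2.0] * 5 + [4.0] * 5
print(energy_per_slot_uj(timestamps, power, sample_period_us=100))
```

Integer microsecond timestamps are used so that samples falling exactly on a slot boundary are not misassigned by floating-point rounding.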

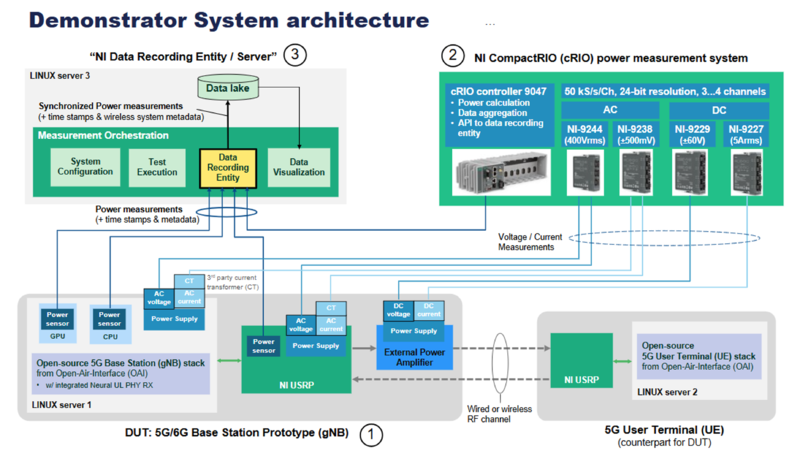

Demonstrator System Architecture

1. 5G/6G Base Station Prototype (gNB)

The DUT includes power sensors (GPU, CPU), a power supply, and an open-source 5G/6G stack from OpenAirInterface. It connects to NI USRP hardware and an external power amplifier. Power channels (AC/DC) are monitored for measurement.

2. NI CompactRIO Power Measurement System

- cRIO Controller 9047 for power calculation and data aggregation

- Measurement modules:

  - NI-9244: AC voltage (400 Vrms)

  - NI-9238: AC current (±500 mV)

  - NI-9229: DC voltage (±60 V)

  - NI-9227: DC current (5 Arms)

- Collects voltage and current measurements from gNB components

3. NI Data Recording Entity / Server

This Linux server handles:

- System configuration

- Test execution

- Data recording

- Data visualization

All measurement data is stored in a data lake with timestamps and metadata.

4. 5G User Terminal (UE)

The UE runs an open-source 5G stack from OpenAirInterface and communicates with the gNB via NI USRP hardware over a wired or wireless RF channel.

Overall Workflow

The gNB and UE communicate over RF. The CompactRIO system measures power data from the gNB and sends it to the centralized data server for recording and analysis.

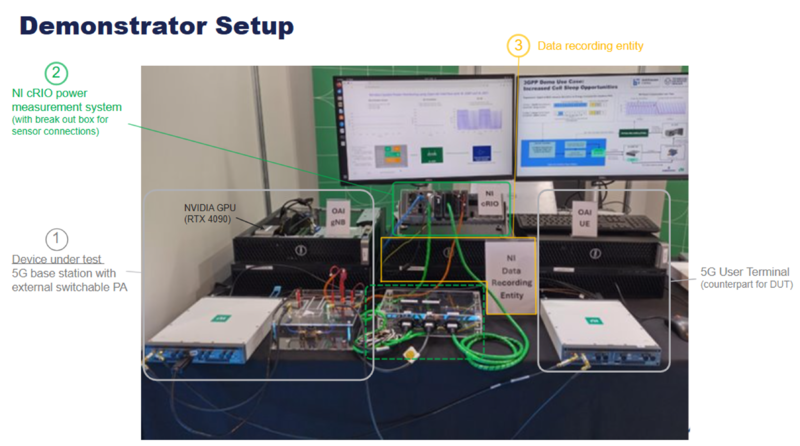

Demonstrator Setup

1. Device Under Test (DUT): 5G Base Station

The left side of the setup contains the 5G base station prototype with an external switchable power amplifier. A computing platform equipped with an NVIDIA RTX 4090 GPU runs the 5G gNB software stack. Multiple cables connect the DUT to measurement equipment and RF interfaces.

2. NI cRIO Power Measurement System

The central upper part of the setup features the NI cRIO system with an attached breakout box for sensor connections. This equipment measures voltage, current, and power consumption from the DUT and forwards the data to the recording server.

3. Data Recording Entity

The central lower section includes the NI Data Recording Entity, responsible for data orchestration, logging, aggregation, and visualization. A monitor above shows dashboards and measurement results.

4. 5G User Terminal (UE)

On the right side is the 5G User Terminal (UE), which acts as the RF counterpart to the DUT. It connects through RF interfaces for 5G communication testing.

5G Sub‑System Demo Configuration (gNB + UE)

1. Wireless Scenario

- Single link between one gNB and one UE

- Wired RF connection

- No interference from:

  - Neighboring cells

  - Other UEs

2. Radio Configuration

Operating Band

- NR Band: n78

Waveform & Numerology

- Subcarrier Spacing (SCS): 30 kHz

- Waveform: CP‑OFDM

- Channel Bandwidth: 40 MHz

- Maximum PRBs: 106

3. Downlink (DL) Scheduling

- Scheduled DL PRBs:

  - 20 PRBs

  - 80 PRBs

- Switch interval: Every 10 frames
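The alternating DL allocation can be expressed as a function of the frame number. The following is a minimal sketch, assuming that the pattern starts with 20 PRBs at frame 0 (the starting phase is not stated in this note) and that the function name is purely illustrative. Since one NR frame lasts 10 ms, a switch every 10 frames corresponds to a switch every 100 ms.

```python
def scheduled_dl_prbs(frame: int, pattern=(20, 80), frames_per_step: int = 10) -> int:
    """Return the DL PRB allocation that applies to the given frame number."""
    step = frame // frames_per_step      # index of the current 10-frame block
    return pattern[step % len(pattern)]  # alternate between the configured values


# Frames 0-9 use 20 PRBs, frames 10-19 use 80 PRBs, then the pattern repeats.
for frame in (0, 9, 10, 19, 20):
    print(frame, scheduled_dl_prbs(frame))
```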

4. Uplink (UL) Scheduling

- Scheduled UL PRBs: 6 (kept low to ensure gNB Neural Rx real‑time performance)

- UL transmission bandwidth: ~2.16 MHz
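The quoted UL bandwidth follows from the PRB count: each PRB spans 12 subcarriers, so 6 PRBs at 30 kHz SCS occupy 6 × 12 × 30 kHz = 2.16 MHz. The short sketch below reproduces that arithmetic and applies it to the DL allocations as well; the helper name is illustrative only.

```python
SUBCARRIERS_PER_PRB = 12


def prb_bandwidth_mhz(n_prb: int, scs_khz: int = 30) -> float:
    """Occupied bandwidth of n_prb resource blocks, in MHz."""
    return n_prb * SUBCARRIERS_PER_PRB * scs_khz / 1000.0


for label, n_prb in (("UL", 6), ("DL low", 20), ("DL high", 80), ("maximum", 106)):
    print(f"{label}: {n_prb} PRBs -> {prb_bandwidth_mhz(n_prb):.2f} MHz")
```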

5. TDD Configuration

DL/UL periodicity: 5 ms

TDD Slot & Symbol Structure

| Parameter | Value |

|---|---|

| nrofDownlinkSlots | 7 |

| nrofDownlinkSymbols | 6 |

| nrofUplinkSlots | 2 |

| nrofUplinkSymbols | 4 |
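A quick consistency check of these values: at 30 kHz SCS a 5 ms period holds 10 slots, so 7 DL slots and 2 UL slots leave one mixed slot, whose 14 symbols split into 6 DL, 4 UL, and 4 guard symbols. The sketch below performs this bookkeeping and also reports the share of DL symbols per period, which is relevant if the PA only needs to be enabled during DL transmission (an assumption made here for illustration). The function name is hypothetical.

```python
SYMBOLS_PER_SLOT = 14  # normal cyclic prefix


def tdd_summary(period_ms, scs_khz, dl_slots, dl_symbols, ul_slots, ul_symbols):
    """Break one DL/UL period into DL, UL, and guard symbols."""
    slots_per_period = period_ms * scs_khz // 15  # one slot lasts 15/scs_khz ms
    mixed_slots = slots_per_period - dl_slots - ul_slots
    guard_symbols = mixed_slots * SYMBOLS_PER_SLOT - dl_symbols - ul_symbols
    if mixed_slots < 0 or guard_symbols < 0:
        raise ValueError("pattern does not fit into the DL/UL period")
    total_symbols = slots_per_period * SYMBOLS_PER_SLOT
    dl_total = dl_slots * SYMBOLS_PER_SLOT + dl_symbols
    ul_total = ul_slots * SYMBOLS_PER_SLOT + ul_symbols
    return {
        "slots_per_period": slots_per_period,
        "mixed_slots": mixed_slots,
        "guard_symbols": guard_symbols,
        "dl_symbols_total": dl_total,
        "ul_symbols_total": ul_total,
        "dl_fraction": dl_total / total_symbols,
    }


summary = tdd_summary(period_ms=5, scs_khz=30,
                      dl_slots=7, dl_symbols=6, ul_slots=2, ul_symbols=4)
print(summary)
```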

6. PUSCH Configuration

- Mapping type: B

- PUSCH duration: 13 OFDM symbols

- DMRS configuration:

  - Type 1

  - dmrs-AdditionalPosition = pos2

  - DMRS positions: Symbols 0, 5, 11

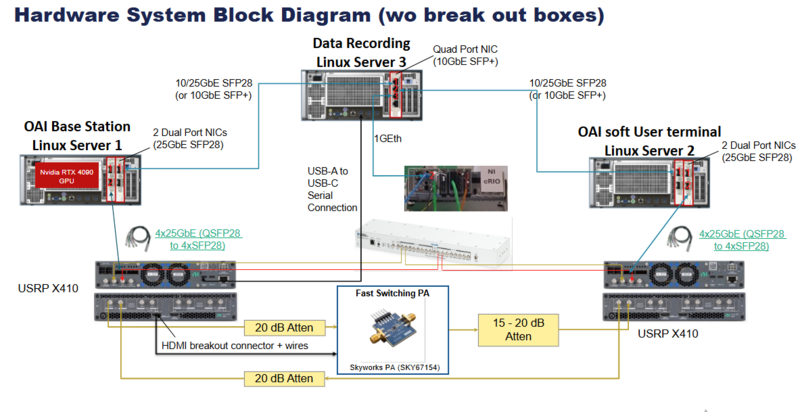

Hardware System Block Diagram Explanation

1. OAI Base Station (gNB) – Linux Server 1

This server runs the OpenAirInterface gNB software stack. It includes an NVIDIA RTX 4090 GPU and connects to a USRP X410 over a high-bandwidth 4×25 GbE link (QSFP28 to 4×SFP28 breakout). The RF paths pass through 20 dB attenuators, and an HDMI breakout connector carries the GPIO signal that controls the power amplifier.

2. OAI Soft User Terminal (UE) – Linux Server 2

This subsystem runs the OAI software UE. It connects to another USRP X410 using dual-port 25 GbE NICs and the same 4×25 GbE optical links.

3. USRP X410 Radios

Two USRP X410 SDR units are used—one for the gNB and one for the UE. They interface via optical fiber and RF lines routed through attenuation and power amplification modules.

4. Data Recording – Linux Server 3

This server includes quad-port 10 GbE NICs and 10/25 GbE SFP28 links for capturing IQ data, logs, and network traffic. USB-A to USB-C serial connections provide PA control. It exchanges control and logging data over 1 GbE.

5. Fast Switching Power Amplifier

A Skyworks SKY67154 fast-switching PA is shown between RF attenuation blocks. It provides controlled RF gain for more realistic testing scenarios.

6. RF Attenuators and Breakout Hardware

The RF path includes multiple fixed 20 dB attenuators and adjustable 15–20 dB attenuators. The HDMI breakout connector does not carry RF; it routes the GPIO control signal to the power amplifier.

7. High-Speed NICs and Optical Links

The system uses QSFP28 to 4×SFP28 breakout cables, 10/25 GbE NICs, and quad-port NICs to transport high-rate IQ and control messages between servers and USRPs.

Power Monitoring Demonstrator – Materials Summary

The Power Monitoring Demonstrator integrates high‑performance servers, SDR radios, RF components, and NI measurement hardware to evaluate and monitor the power consumed during wireless 5G/6G operation. Servers provide compute and networking, USRPs generate and receive RF signals, and the cRIO system captures accurate AC/DC measurements. RF attenuators, cables, and accessories ensure safe and controlled signal flow during operation.

| Equipment | Type | Quantity |

|---|---|---|

| Server | Dell Precision 5860 Tower | 3 |

| Network Card (10GbE SFP+) | Intel X710 Quad Port 10GbE SFP+ Adapter | 1 |

| Eth Cable (SFP+) | 10G Eth Cable SFP+ 2m | 2 |

| SFP-to-RJ45 Adapter | SFP 1 Gigabit Ethernet Interface Kit / FCLF8522P2BTL | 1 |

| Network Card (25GbE SFP28) | Mellanox NIC 2×1/10/25GbE SFP28 (MCX512A-ACAT) | 4 |

| QSFP28 → 4×SFP28 Cable | NVIDIA MCP7F00-A003R26N 100GbE to 4×25GbE, 3m or NI QSFP28→4×SFP28 Breakout (PN 788214-01) | 2 |

| NVIDIA GPU | NVIDIA GeForce RTX 4090 Founders Edition | 1 |

| Extra GPU Power Cable | COMeap 10‑pin to 8‑pin PCIe Cable (not required for Dell 5860) | 1 |

| NI USRP | USRP X410 | 2 |

| External Clock + PPS Source | OctoClock‑G CDA‑2990 | 1 |

| cRIO Power Measurement System | cRIO 9047 + NI 9238, NI‑9244, NI‑9229, NI‑9227 modules | 1 |

| RF Cables | SMA(M)‑to‑SMA(M) RF Cables (1m) | 7 |

| 20 dB RF Attenuators | SMA‑F to SMA‑M Attenuators | 2 |

| 10 dB RF Attenuators | SMA‑F to SMA‑M Attenuators | 2 |

| Switchable Power Amplifier | Skyworks PA SKY67154 | 1 |

| AC Current Clamps | Magnelab SCT‑0750‑020 | 2 |

| HDMI Breakout Connector | 19‑pin HDMI Terminal Block Adapter | 1 |

| USB‑C to USB‑A Cable | Standard | 1 |

| Network Switch (Optional) | 4+ Ethernet Ports (for demo / remote access) | 1 |

| 1G Ethernet Cables | Standard | 4 |

RF and Network Connections Overview

System Overview

This testbed implements a complete 5G NR gNB ↔ UE link in a wired back-to-back configuration using OpenAirInterface (OAI) software. Three Linux servers handle baseband processing, user terminal emulation, and data recording, respectively. Two USRP X410 units serve as the RF front-ends, connected through attenuators and a fast-switching power amplifier.

Hardware Components

Linux Server 1 - OAI Base Station

- Runs the OAI gNB 5G NR stack

- Equipped with Nvidia RTX 4090 GPU for real-time baseband acceleration (LDPC, FFT, channel estimation)

- Connected to USRP X410 via 4×25GbE QSFP28 → 4×SFP28 breakout cable

- Controls the Fast Switching PA via HDMI breakout connector + GPIO wires

Linux Server 2 - OAI Soft User Terminal (UE)

- Runs the OAI UE software stack (soft user terminal)

- Mirror configuration to Server 1

- Connected to USRP X410 via 4×25GbE QSFP28 → 4×SFP28 breakout cable

Linux Server 3 — Data Recording

- Central logging, packet capture, and experiment orchestration server

- Connects to both Server 1 and Server 2 via 10/25GbE SFP28 or 10GbE SFP+

- Connects to the NI cRIO chassis via 1GbE and a USB-A → USB-C serial link

USRP X410 (×2)

- NI/Ettus Software Defined Radio units acting as the RF front-end

- One unit per server (gNB side and UE side)

- I/Q samples streamed over high-speed SFP28 links

Fast Switching PA — Skyworks SKY67154

- Wideband GaAs power amplifier on a custom breakout PCB

- GPIO-controlled via HDMI breakout from Server 1

- Enables TDD-synchronized TX/RX switching aligned to 5G NR slot timing

Attenuators

| Position | Value | Purpose |

|---|---|---|

| gNB TX output | 20 dB | Limit the drive level from the USRP X410 TX into the PA |

| PA output (DL) | 15–20 dB | Protect UE-side USRP RX input |

| UE TX output (UL) | 20 dB | Protect gNB-side USRP RX input |

NI cRIO (CompactRIO)

Connected to Server 3 via 1GbE (192.168.120.2) and a USB-C serial console. The NI cRIO is a real-time embedded controller. In this testbed it hosts the C Series modules that acquire the voltage and current measurements, and it also provides low-latency hardware control, timing synchronization, and auxiliary I/O management.

Network Interfaces

Server 1 ↔ Server 3 (Management / Data)

| Parameter | Value |

|---|---|

| Server 1 IP | 192.168.100.2 |

| Server 3 IP | 192.168.100.1 |

| Link Type | 10/25GbE SFP28 or 10GbE SFP+ |

Server 2 ↔ Server 3 (Management / Data)

| Parameter | Value |

|---|---|

| Server 2 IP | 192.168.110.2 |

| Server 3 IP | 192.168.110.1 |

| Link Type | 10/25GbE SFP28 or 10GbE SFP+ |

Server 1 ↔ USRP X410 (Fronthaul)

| Parameter | Value |

|---|---|

| Server 1 IPs | 192.168.10.2 / 192.168.11.2 |

| USRP X410 IPs | 192.168.10.1 / 192.168.11.1 |

| Link Type | 4×25GbE QSFP28 → 4×SFP28 |

Server 2 ↔ USRP X410 (Fronthaul)

| Parameter | Value |

|---|---|

| Server 2 IPs | 192.168.10.2 / 192.168.11.2 |

| USRP X410 IPs | 192.168.10.1 / 192.168.11.1 |

| Link Type | 4×25GbE QSFP28 → 4×SFP28 |

Server 3 ↔ NI cRIO

| Parameter | Value |

|---|---|

| Server 3 IP | 192.168.120.1 |

| NI cRIO IP | 192.168.120.2 |

| Link Type | 1GbE + USB-A → USB-C Serial |

RF Signal Chain

Downlink (gNB TX → UE RX)

USRP X410 (Server 1)

│

▼

20 dB Attenuator

│

▼

Fast Switching PA (Skyworks SKY67154)

│

▼

15–20 dB Attenuator

│

▼

USRP X410 (Server 2)

Uplink (UE TX → gNB RX)

USRP X410 (Server 2)

│

▼

20 dB Attenuator

│

▼

USRP X410 (Server 1)

Note: The Fast Switching PA is only in the downlink path. The HDMI breakout connector carries GPIO control signals from Server 1 to enable time-synchronized PA switching per 5G TDD frame structure.
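To reason about signal levels along the two chains, the gains and losses can be summed in dB. The sketch below is only a bookkeeping aid: the 0 dBm USRP output level and the 30 dB PA gain are placeholder values chosen for illustration, not measured or datasheet figures, and cable losses are ignored.

```python
def cascade_dbm(input_dbm, stages):
    """Return the level after each (name, gain_db) stage; losses are negative gains."""
    level = input_dbm
    levels = []
    for name, gain_db in stages:
        level += gain_db
        levels.append((name, level))
    return levels


USRP_TX_DBM = 0.0   # placeholder transmit level
PA_GAIN_DB = 30.0   # placeholder gain, take the real value from the PA datasheet

downlink = [("20 dB attenuator", -20.0),
            ("Fast Switching PA", PA_GAIN_DB),
            ("15-20 dB attenuator (set to 20 dB)", -20.0)]
uplink = [("20 dB attenuator", -20.0)]

for name, level in cascade_dbm(USRP_TX_DBM, downlink):
    print(f"DL after {name}: {level:+.1f} dBm")
for name, level in cascade_dbm(USRP_TX_DBM, uplink):
    print(f"UL after {name}: {level:+.1f} dBm")
```

The final level of each chain should be compared against the maximum input level specified for the receiving USRP before any cable is connected.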

IP Address Reference

| Device | Interface | IP Address |

|---|---|---|

| Server 1 | To Server 3 | 192.168.100.2 |

| Server 1 | To USRP P1 | 192.168.10.2 |

| Server 1 | To USRP P2 | 192.168.11.2 |

| Server 2 | To Server 3 | 192.168.110.2 |

| Server 2 | To USRP P1 | 192.168.10.2 |

| Server 2 | To USRP P2 | 192.168.11.2 |

| Server 3 | To Server 1 | 192.168.100.1 |

| Server 3 | To Server 2 | 192.168.110.1 |

| Server 3 | To NI cRIO | 192.168.120.1 |

| USRP X410 (gNB) | P1 | 192.168.10.1 |

| USRP X410 (gNB) | P2 | 192.168.11.1 |

| USRP X410 (UE) | P1 | 192.168.10.1 |

| USRP X410 (UE) | P2 | 192.168.11.1 |

| NI cRIO | To Server 3 | 192.168.120.2 |
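The address plan can be sanity-checked before cabling by confirming that both ends of every point-to-point link fall into the same subnet. The sketch below uses Python's standard `ipaddress` module and assumes a /24 prefix on every link; the /24 prefix is stated in this note only for the Server 3 interfaces, so it is an assumption for the fronthaul links. Server 1 and Server 2 reuse the same fronthaul addresses, which works because each server has its own dedicated links to its own USRP.

```python
import ipaddress

# Link endpoints taken from the IP address reference table.
LINKS = {
    "Server 1 <-> Server 3": ("192.168.100.2", "192.168.100.1"),
    "Server 2 <-> Server 3": ("192.168.110.2", "192.168.110.1"),
    "Server 3 <-> NI cRIO": ("192.168.120.1", "192.168.120.2"),
    "Server <-> USRP P1": ("192.168.10.2", "192.168.10.1"),
    "Server <-> USRP P2": ("192.168.11.2", "192.168.11.1"),
}


def same_subnet(addr_a: str, addr_b: str, prefix: int = 24) -> bool:
    """True if addr_b lies in the network defined by addr_a and the prefix length."""
    network = ipaddress.ip_interface(f"{addr_a}/{prefix}").network
    return ipaddress.ip_address(addr_b) in network


def check_links(links, prefix: int = 24):
    """Map each link name to whether its two endpoints share a subnet."""
    return {name: same_subnet(a, b, prefix) for name, (a, b) in links.items()}


for name, ok in check_links(LINKS).items():
    print(f"{name}: {'OK' if ok else 'MISMATCH'}")
```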

Power Measurement and PA Control Connections


This section describes the power measurement setup and PA control wiring used in the 5G NR testbed, including DC/AC measurement modules installed in the NI cRIO chassis and the PA enable signal from the USRP X410.

System Components

| Component | Description |

|---|---|

| Base Station Server | Main compute server running the OAI gNB stack |

| RU (USRP X410) | Radio Unit providing RF front-end and PA enable GPIO via HDMI breakout |

| Fast Switching PA Fixture | Skyworks SKY67154 PA board receiving V_EN control signal and DC supply |

| NI cRIO Chassis | CompactRIO hosting measurement modules for DC and AC power monitoring |

| Main Power Supply | AC mains supply powering the gNB and RU equipment |

| cRIO DC Supply | 24V / 5A dedicated DC supply for the NI cRIO chassis |

| 5V USB Power Supply | 5V supply for the Fast Switching PA Fixture (V_DD) |

NI cRIO Measurement Modules

| Module | Type | Channel | Wiring |

|---|---|---|---|

| NI 9227 | DC PA Current | Ch0 | According to current flow: 0+ to 0− |

| NI 9229 | DC PA Voltage | Ch0 | red − 0+ / black − 0− |

| NI 9238 | AC Current | gNB − ch0 / RU − ch1 | White − 0+ / Black − 0− |

| NI 9244 | AC Voltage | L1, N | AC Voltage measurement |
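Each voltage module is paired with a current module so that power can be derived from simultaneously sampled waveforms. As a minimal sketch of that calculation (not the cRIO implementation), real power is the mean of the sample-wise product of voltage and current, taken over a whole number of mains cycles for the AC channels. The code assumes samples that are already scaled to volts and amperes; the waveforms are synthetic and the helper names are hypothetical.

```python
import math


def rms(samples):
    """Root-mean-square value of a sequence of samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def mean_power_w(voltage_v, current_a):
    """Real power: average of v[n] * i[n] over the record."""
    if len(voltage_v) != len(current_a) or not voltage_v:
        raise ValueError("voltage and current records must be non-empty and equal in length")
    return sum(v * i for v, i in zip(voltage_v, current_a)) / len(voltage_v)


def synth_sine(amplitude, n, phase_rad=0.0):
    """One full cycle of a sine wave sampled at n points (synthetic test signal)."""
    return [amplitude * math.sin(2 * math.pi * k / n + phase_rad) for k in range(n)]


# Synthetic AC example: 230 Vrms, 2 Arms, current lagging by 60 degrees.
n = 1000
v_ac = synth_sine(230 * math.sqrt(2), n)
i_ac = synth_sine(2 * math.sqrt(2), n, phase_rad=-math.pi / 3)
real_w = mean_power_w(v_ac, i_ac)
apparent_va = rms(v_ac) * rms(i_ac)
print(f"real {real_w:.1f} W, apparent {apparent_va:.1f} VA, PF {real_w / apparent_va:.2f}")

# Synthetic DC example for the PA supply: 5 V at 0.2 A.
print(f"PA DC power {mean_power_w([5.0] * 4, [0.2] * 4):.2f} W")
```

Averaging the instantaneous product, rather than multiplying the two RMS values, accounts for the phase shift between voltage and current.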

PA Enable Control (HDMI Breakout)

The USRP X410 (RU) controls the Fast Switching PA via the HDMI breakout connector. The GPIO pins carry the PA enable signal synchronized to the 5G TDD frame timing.

| HDMI Pin | Signal | Wire Color | Description |

|---|---|---|---|

| Pin 17 | GND | Black | Ground reference |

| Pin 16 | V_EN | Red | 3.3V PA enable signal |

Note: The signal is labeled GPIB HDMI PA enable in the diagram. The V_EN line switches the PA on/off in sync with gNB TX/RX slot timing.

Power Supply Connections

| Supply | Voltage / Current | Powers | Connection |

|---|---|---|---|

| Main Power Supply | AC Mains | Base Station Server + RU (USRP X410) | To AC Power Supply (×2 outputs) |

| cRIO DC Supply | 24V / 5A | NI cRIO Chassis | Direct DC wiring to cRIO |

| 5V USB Power Supply | 5V | Fast Switching PA Fixture (V_DD) | Red (+) / Black (−) wiring to PA board |

AC Power & Measurement Wiring

AC power is distributed from the Main Power Supply via a green wire bus to:

- The NI cRIO chassis (via NI 9244 AC Voltage module — L1, N)

- The NI 9238 AC Current module measuring current drawn by gNB (ch0) and RU (ch1)

The cRIO ground and AC Current sense lines are connected at the cRIO chassis as indicated by the blue arrow in the diagram.

Signal Flow Summary

AC Mains

│

├──► Main Power Supply ──► Base Station Server

│ └──► RU (USRP X410)

│

├──► NI 9244 (AC Voltage: L1, N)

├──► NI 9238 (AC Current: gNB ch0, RU ch1)

│

└──► cRIO DC Supply (24V/5A) ──► NI cRIO Chassis

RU (USRP X410)

│

└──► HDMI Breakout (Pin16: V_EN 3.3V, Pin17: GND)

│

└──► Fast Switching PA Fixture (PA Enable)

5V USB Power Supply

│

└──► Fast Switching PA Fixture (V_DD)

NI cRIO

├──► NI 9227: DC PA Current (Ch0)

├──► NI 9229: DC PA Voltage (Ch0)

├──► NI 9238: AC Current (gNB ch0 / RU ch1)

└──► NI 9244: AC Voltage (L1, N)

3. Software Installation

This section covers the software installation for all four entities in the testbed. For OAI gNB with Neural Rx and OAI UE, the installation follows the existing Application Note AN-829. The sections below provide direct references to the relevant steps in that document, along with the additional installation procedures for the NI cRIO and the Data Recording Server.

| # | Entity | Host | Reference |

|---|---|---|---|

| 1 | OAI gNB with Neural Rx | Linux Server 1 | AN-829 — Ettus KB |

| 2 | OAI UE | Linux Server 2 | AN-829 — Ettus KB |

| 3 | NI cRIO | CompactRIO Chassis | See Section 3.3 below |

| 4 | Data Recording Server | Linux Server 3 | See Section 3.4 below |

3.1 OAI gNB with Neural Rx (Linux Server 1)

The OAI gNB software installation on Server 1 follows the procedure described in the existing Ettus Knowledge Base Application Note AN-829: 5G OAI Neural Receiver Testbed with USRP X410.

The relevant sections to follow are listed below in order.

| Step | Section in AN-829 | Description |

|---|---|---|

| 1 | Power Management and BIOS Settings | Disable C-states, Hyper-Threading; enable SpeedStep and Turbo Boost |

| 2 | High-Speed Ethernet Configuration | Set MTU=9000, socket buffers, ring buffers for SFP28 interfaces |

| 3 | NVIDIA CUDA Drivers | Install Nvidia driver 535 + CUDA 12.2 on Ubuntu 22.04 |

| 4 | TensorFlow 2.14 + TensorRT + TF C-API | Install TF 2.14 in Python venv, install TF C-API to /usr/local/lib |

| 5 | UHD 4.8.0.0 | Build and install UHD from source; download USRP images |

| 6 | OAI gNB Source Code | Clone NI repository branch; comment out UHD re-install in build script |

| 7 | Neural Receiver Library | Build libtest_inference.a and call_test_inference.o |

| 8 | gNB and USRP Configuration | Set USRP IP addresses and clock source in .conf file |

| 9 | FlexRIC | Build FlexRIC with SWIG 4.1 and GCC 10/12 |

| 10 | xApp gRPC Service | Build xAppTechDemo library and gRPC server interface |

Note: Server 1 requires the Nvidia RTX 4090 GPU. Ensure the GPU is installed before beginning the CUDA driver installation step.

3.2 OAI UE (Linux Server 2)

The OAI Soft UE software installation on Server 2 also follows AN-829. Server 2 does not require GPU acceleration or the Neural Receiver library.

The relevant sections to follow are listed below in order.

| Step | Section in AN-829 | Description |

|---|---|---|

| 1 | Power Management and BIOS Settings | Same BIOS optimizations as Server 1 |

| 2 | High-Speed Ethernet Configuration | MTU=9000 and socket buffer tuning for SFP28 interfaces |

| 3 | UHD 4.8.0.0 | Build and install UHD from source (same as gNB side) |

| 4 | OAI UE Source Code | Clone NI UE repository; comment out UHD re-install in build script |

| 5 | Build OAI Soft UE | Build with --nrUE -w USRP flags |

| 6 | OAI UE Configuration | Set IMSI, key, OPC, DNN in ue.conf |

Note: Server 2 does not require TensorFlow, CUDA, or the Neural Receiver library. A lower-performance GPU (e.g., RTX 4060) may be installed but is not required for UE operation.

3.3 NI cRIO Software Installation

The NI CompactRIO (cRIO) is used for real-time power measurement and PA control. It runs a LabVIEW Real-Time application deployed from NI MAX or the LabVIEW IDE.

3.3.1 Prerequisites

| Software | Version | Notes |

|---|---|---|

| NI-RIO Driver | 2023 Q4 or later | Required for cRIO communication |

| LabVIEW Real-Time Module | 2023 or later | Deployed to cRIO chassis |

| NI-DAQmx | 2023 or later | Required for NI 9227, 9229, 9238, 9244 modules |

| NI MAX (Measurement & Automation Explorer) | Latest | Used to configure IP and deploy RT application |

3.3.2 Network Configuration

Configure the cRIO IP address using NI MAX:

- Connect the cRIO to Server 3 via 1GbE cable.

- Open NI MAX on Server 3.

- Navigate to Remote Systems → locate the cRIO device.

- Set the IP address to 192.168.120.2, subnet 255.255.255.0.

- Set the gateway to 192.168.120.1 (Server 3).

- Apply and reboot the cRIO.

Verify connectivity from Server 3:

ping 192.168.120.2

3.3.3 Module Slot Assignment

Verify that the following NI C Series modules are installed in the correct chassis slots and recognized in NI MAX:

| Slot | Module | Function |

|---|---|---|

| Slot 1 | NI 9227 | DC PA Current measurement (Ch0) |

| Slot 2 | NI 9229 | DC PA Voltage measurement (Ch0) |

| Slot 3 | NI 9238 | AC Current measurement (gNB ch0, RU ch1) |

| Slot 4 | NI 9244 | AC Voltage measurement (L1, N) |

3.3.4 LabVIEW RT Application Deployment

- Open the cRIO LabVIEW RT project in LabVIEW on Server 3.

- Right-click the cRIO target → Connect.

- Right-click the RT application VI → Deploy.

- Set the application to Run at Startup for autonomous operation.

- Verify the deployment via NI MAX → Software tab on the cRIO target.

3.3.5 Serial Console Verification

Verify the USB serial connection between Server 3 and the cRIO:

ls /dev/ttyUSB*

The cRIO serial console should appear as /dev/ttyUSB0 or similar.

Connect using:

sudo screen /dev/ttyUSB0 115200

3.4 Data Recording Server Installation (Linux Server 3)

Server 3 acts as the central data recording and experiment orchestration node. It requires network configuration, data capture tools, and connection management for both the compute servers and the NI cRIO.

3.4.1 Operating System

| Parameter | Value |

|---|---|

| OS | Ubuntu 22.04.5 LTS |

| Kernel | 6.5.0-44-generic or later |

| Installation type | Bare-metal (no VM) |

3.4.2 Network Interface Configuration

Configure all interfaces with static IP addresses. Edit the Netplan configuration file:

sudo nano /etc/netplan/01-netcfg.yaml

Example configuration:

network:

  version: 2

  ethernets:

    enp<server1_iface>:

      addresses: [192.168.100.1/24]

    enp<server2_iface>:

      addresses: [192.168.110.1/24]

    enp<crio_iface>:

      addresses: [192.168.120.1/24]

Apply the configuration:

sudo netplan apply

Verify connectivity to all nodes:

ping 192.168.100.2 # Server 1

ping 192.168.110.2 # Server 2

ping 192.168.120.2 # NI cRIO

3.4.3 Required Software Packages

Install the following packages:

sudo apt update

sudo apt install -y python3 python3-pip wireshark tcpdump net-tools openssh-server screen git

Install Python dependencies for data recording scripts:

pip3 install numpy pandas matplotlib scipy

3.4.4 Packet Capture Setup

Verify that Wireshark or tcpdump can capture on the relevant interfaces:

sudo tcpdump -i enp<server1_iface> -w /data/capture_gnb.pcap

Ensure the /data directory has sufficient storage for experiment recordings.

3.4.5 SSH Access Configuration

Enable passwordless SSH from Server 3 to Server 1 and Server 2 for automated experiment orchestration:

ssh-keygen -t rsa -b 4096

ssh-copy-id <user>@192.168.100.2 # Server 1

ssh-copy-id <user>@192.168.110.2 # Server 2

3.5 Software Installation Summary

| Entity | Server | OS | Key SW Components | GPU Required |

|---|---|---|---|---|

| OAI gNB + Neural Rx | Server 1 | Ubuntu 22.04.5 | UHD 4.8, CUDA 12.2, TF 2.14, OAI gNB, FlexRIC, xApp | Yes — RTX 4090 |

| OAI UE | Server 2 | Ubuntu 22.04.5 | UHD 4.8, OAI nrUE | Optional |

| NI cRIO | cRIO Chassis | NI Linux Real-Time | NI-RIO, NI-DAQmx, LabVIEW RT App | No |

| Data Recording | Server 3 | Ubuntu 22.04.5 | Python 3, Wireshark, tcpdump, SSH | No |

3.3 NI cRIO Software Installation

The NI CompactRIO (cRIO-9047) supports two installation methods for the Python-based gRPC server software: an automated deployment script (recommended) and a manual installation procedure. Both methods are described below.

For more details on general cRIO hardware setup, refer to the Getting Started with CompactRIO Hardware and LabVIEW guide.

3.3.1 cRIO System Preparation

- Download and install NI CompactRIO driver version 2025 Q1 from the NI CompactRIO Download page.

- Download and install Base Image 2025 Q1 from the NI Linux RT System Image Download page.

- In NI MAX, follow step 4 of the Getting Started guide referenced above, up to the Base Image installation, and select Linux RT System Image 2025 Q1 (Version 25.0.0).

- After the automatic cRIO reboot, you will be asked to select a programming environment. Choose Other (…, Python, etc.).

- Keep the default software selections and click Continue.

Note: If you encounter issues setting the password, finalize the software installation first and set the password afterwards.

After completing these steps, NI MAX should show the cRIO target with the following software installed under the Software tab:

| Software Component | Version |

|---|---|

| Linux RT System Image 2025 Q1 | 25.0.0 |

| CompactRIO Support | 25.0.0 |

| NI System Configuration | 25.0.0 |

| NI-DAQmx | 25.0.0 |

| NI-RIO Server | 25.0.0 |

| NI-VISA | 25.0.0 |

| NI Sync | 25.0.0 |

| SystemLink Client | 25.0.0 |

And the following C Series modules should appear under the cRIO chassis:

| Slot | Module | Assigned Name |

|---|---|---|

| 1 | NI 9227 | NI-9227 |

| 2 | NI 9229 | NI-9229 |

| 3 | NI 9238 | NI-9238 |

| 4 | NI 9244 | NI-9244 |

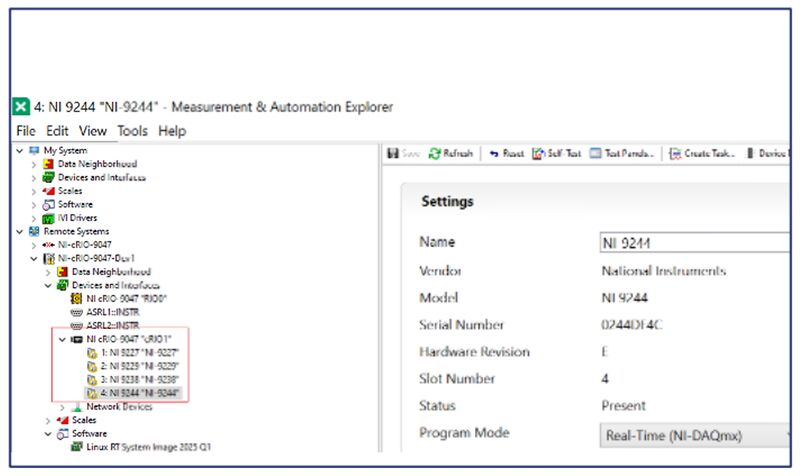

3.3.2 Firmware Update

If a firmware update is required, update to version 24.5.0f133-x64.

The firmware file is located at:

Firmware File Location

To update via NI MAX:

- Select the cRIO target in the left panel (e.g., NI-cRIO-9047-Dev1).

- In the System Settings panel on the right, verify the current Firmware Version.

- Click Update Firmware and follow the on-screen instructions.

- Verify the Operating System shows: NI Linux Real-Time x64 6.1.119-rt45

- Verify Status shows: Connected - Running

3.3.3 Rename C Series Modules in NI MAX

The module names are used by the Python RT implementation to identify modules based on a JSON configuration file. Rename each module in NI MAX as follows:

- In NI MAX, expand the cRIO chassis node to show the installed modules.

- Right-click each module → Rename.

- Set the names exactly as shown in the table below.

| Slot | Module | Required Name |

|---|---|---|

| 1 | NI 9227 | NI-9227 |

| 2 | NI 9229 | NI-9229 |

| 3 | NI 9238 | NI-9238 |

| 4 | NI 9244 | NI-9244 |

To verify the module settings, click on each module in NI MAX and confirm:

- Program Mode: Real-Time (NI-DAQmx)

- Status: Present
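Because the Python implementation looks up modules by name, a small pre-flight check that compares configured names against the required ones can catch a missed rename early. The sketch below is hypothetical: the JSON layout (a `modules` object keyed by slot number) is an assumption made for illustration, since the actual schema of the configuration file is not shown in this note.

```python
import json

# Required names from the table above, keyed by chassis slot.
REQUIRED_NAMES = {1: "NI-9227", 2: "NI-9229", 3: "NI-9238", 4: "NI-9244"}


def find_name_mismatches(config_text: str, required=REQUIRED_NAMES):
    """Return {slot: (expected, found)} for every slot whose name differs."""
    config = json.loads(config_text)
    found = {int(slot): name for slot, name in config["modules"].items()}
    return {
        slot: (expected, found.get(slot))
        for slot, expected in required.items()
        if found.get(slot) != expected
    }


# Hypothetical configuration in which slot 3 still uses a space instead of a hyphen.
example = '{"modules": {"1": "NI-9227", "2": "NI-9229", "3": "NI 9238", "4": "NI-9244"}}'
print(find_name_mismatches(example))
```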

3.3.4 Network Adapter and User Settings

Network Configuration

To change IP settings in NI MAX:

- Select the cRIO target → Network Settings tab.

- Under Ethernet Adapter eth0 (Primary), configure:

| Parameter | Value |

|---|---|

| Adapter Mode | TCP/IP Network |

| Configure IPv4 Address | Static (or DHCP or Link Local) |

| IPv4 Address | 192.168.120.2 |

| Subnet Mask | 255.255.255.0 |

| Gateway | 192.168.120.1 |

| DNS Server | 172.22.86.10 (or as required) |

Note: eth1 and usb0 adapters can remain at default 0.0.0.0 settings unless additional interfaces are needed.

User / Password Settings

To set the administrator password, click Set Permissions in the NI MAX toolbar:

| Field | Value |

|---|---|

| Old password | <keep empty> |

| New password | admin |

| Confirm password | admin |

Click OK and reboot the cRIO to apply.

3.3.5 Prepare cRIO Python Environment

Before installing the Python software, prepare the directory structure and locale settings on the cRIO.

Get the Software Packages

Obtain the following Python wheel files from the repository:

| Package | Source |

|---|---|

| crio_grpc_shared | Internal repo or external .whl file |

| crio_grpc_server | Internal repo or external .whl file |

| crio_grpc_client | Internal repo (host PC only) |

Dependencies are managed via poetry for consistent version management.

For more details refer to: support_lti_6g_sw/power_monitoring/crio/python/README.md — "General" section.

Create Directory Structure on cRIO

SSH into the cRIO and create the required directories:

ssh admin@<ip address of crio>

mkdir crio_python

mkdir crio_python/installers

exit

Fix Locale Settings

Incorrect locale settings can cause DAQmx errors. Fix this as follows.

Optionally install nano on the cRIO:

opkg update

opkg install nano

Edit the bashrc file:

nano ~/.bashrc

Add the following two lines at the bottom:

export LC_ALL=en_US.UTF-8

export LANG=en_US.UTF-8

Save with Ctrl+S, exit with Ctrl+X, then restart the shell or reboot the cRIO.

Note: VS Code can be used to connect via SSH for file editing and copying. However, VS Code versions above 1.98.2 may have issues with SSH connections to the cRIO. See the Remote Development FAQ for details.

3.3.6 Install cRIO Python Software

Two installation methods are available: automated script (recommended) or manual.

Method A: Automated Deployment Script (Recommended)

Open the deployment script on the Host PC:

support_lti_6g_sw/power_monitoring/crio/python/deploy_crio_server.sh

Edit the configuration section at the top of the script to match your setup:

# Configuration

CRIO_HOST="192.168.120.2" # Update with your cRIO's IP address or hostname

CRIO_USER="admin"

CRIO_INSTALL_DIR="/home/admin/crio_python/installers"

CRIO_VENV="/home/admin/crio_python/.venv"

# Directories

BASE_DIR="/home/user/workarea/oai/lti-6g-sw_oai-5g-ran/support_lti_6g_sw/power_monitoring/crio/python"

SHARED_DIR="${BASE_DIR}/crio_grpc_shared"

SERVER_DIR="${BASE_DIR}/crio_grpc_server"

Run the script:

./deploy_crio_server.sh

The script will automatically:

- Build the Python packages

- Connect to the cRIO via SSH

- Install all required packages into the virtual environment

Method B: Manual Installation

Step 1: Copy the Python wheel files from the Host PC to the cRIO via SCP:

scp -r <path>\crio_grpc_shared-0.1.1-py3-none-any.whl admin@<IP-address>:~/crio_python/installers

scp -r <path>\crio_grpc_server-0.1.1-py3-none-any.whl admin@<IP-address>:~/crio_python/installers

Step 2: SSH into the cRIO:

ssh admin@<ip address of crio>

Step 3: Install dependencies and Python packages:

opkg install python3-pip

python3 -m venv ~/crio_python/.venv

cd crio_python/

source .venv/bin/activate

python3 -m pip install installers/crio_grpc_shared-0.1.1-py3-none-any.whl

python3 -m pip install installers/crio_grpc_server-0.1.1-py3-none-any.whl

3.3.7 Run the gRPC Server on the cRIO

Two options are available to start the gRPC server on the cRIO.

Option 1: Run Manually from Virtual Environment

ssh admin@<ip address of crio>

cd /home/admin/crio_python

source .venv/bin/activate

python3 .venv/lib/python3.12/site-packages/crio_grpc_server/energy_efficiency_grpc_server.py --device_type CRIO --log_level INFO

Option 2: Startup Script

Copy the startup script to the cRIO:

scp -r <path>\startup_crio.sh admin@<IP-address>:~/

The startup script content is as follows:

#!/bin/bash

cd /home/admin/crio_python

source .venv/bin/activate

exec python3 .venv/lib/python3.12/site-packages/crio_grpc_server/energy_efficiency_grpc_server.py --device_type CRIO --log_level INFO

Run the startup script:

cd /home/admin/

./startup_crio.sh

A successful startup will show the following log output:

INFO - Initializing CRIO gRPC server...

INFO - Establishing connection to CRIO device...

INFO - Initializing CRIO gRPC server...

INFO - Configuring...

INFO - Finished processing state IDLE exit callbacks.

INFO - Finished processing state IDLE enter callbacks.

INFO - Configuring...

INFO - Executed callback '__reset_and_configure_measurement__'

INFO - Server started, listening on 2032
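When the server is started from an automation script, the startup can be confirmed by scanning the captured log for the final "Server started" line. The sketch below assumes the log format shown above and that the log text has been captured into a list of lines; the helper name is illustrative.

```python
import re

LISTEN_RE = re.compile(r"Server started, listening on (\d+)")


def listening_port(log_lines):
    """Return the port from the 'Server started' line, or None if it is absent."""
    for line in log_lines:
        match = LISTEN_RE.search(line)
        if match:
            return int(match.group(1))
    return None


sample_log = [
    "INFO - Initializing CRIO gRPC server...",
    "INFO - Establishing connection to CRIO device...",
    "INFO - Executed callback '__reset_and_configure_measurement__'",
    "INFO - Server started, listening on 2032",
]
print(listening_port(sample_log))
```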

3.3.8 Install gRPC Client on Host PC

The gRPC client runs on the Host PC (Server 3) and communicates with the cRIO gRPC server.

Prerequisites: Python 3.11 or later (verified with 3.12).

Step 1: Prepare the Python virtual environment on the Host PC:

python -m venv <path>\crio\.venv

cd <path>\crio

.\.venv\Scripts\activate

python -m pip install .\crio_grpc_shared-0.1.1-py3-none-any.whl

python -m pip install .\crio_grpc_client-0.1.1-py3-none-any.whl

Step 2: Copy the installed example scripts to your project folder:

.venv\Lib\site-packages\crio_grpc_client\energy_efficiency_grpc_client_basicExample.py

.venv\Lib\site-packages\crio_grpc_client\energy_efficiency_grpc_client_basicVisualizationExample.ipynb

Step 3: Set the cRIO IP address in the example scripts:

grpc_target = "192.168.120.2:2032"
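Before running the example scripts, it can help to confirm that the configured target is well formed and that the port accepts TCP connections. The following sketch uses only the Python standard library and does not call the gRPC client package; the helper names are hypothetical, and a successful TCP connection only shows that something is listening on the port, not that the gRPC service itself is healthy.

```python
import socket


def parse_target(target: str):
    """Split a 'host:port' string into (host, port) and validate the port."""
    host, _, port = target.rpartition(":")
    if not host or not port.isdigit():
        raise ValueError(f"expected 'host:port', got {target!r}")
    return host, int(port)


def is_reachable(target: str, timeout_s: float = 2.0) -> bool:
    """Try to open a TCP connection to the target within the timeout."""
    host, port = parse_target(target)
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        return False


grpc_target = "192.168.120.2:2032"
print(parse_target(grpc_target))
# On the Host PC, with the cRIO server running:
#     print(is_reachable(grpc_target))
```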

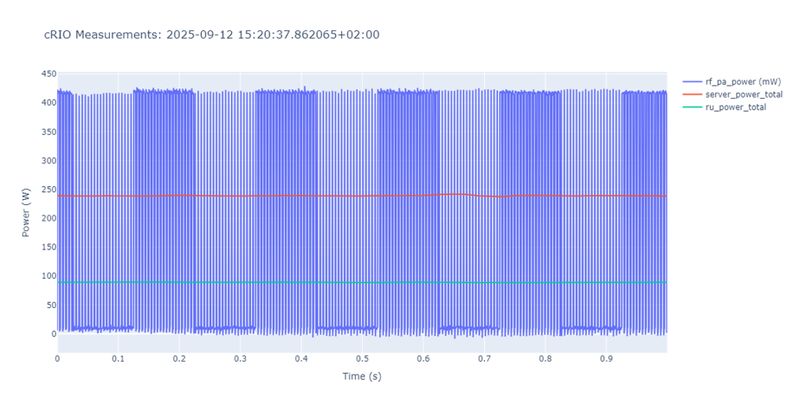

3.3.9 Verify: Run Example Measurement gRPC Client

With the gRPC server running on the cRIO, run the Jupyter notebook example on the Host PC:

energy_efficiency_grpc_client_basicVisualizationExample.ipynb

This can be opened using:

- Visual Studio Code with the Python virtual environment activated

- Jupyter web server

A successful run will produce a real-time power measurement plot showing:

| Trace | Color | Measured Entity |

|---|---|---|

| rf_pa_power (mW) | Blue | Power Amplifier (PA) |

| server_power_total | Red | gNB Server |

| ru_power_total | Green | USRP (RU) |
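Once the traces are available, they can be reduced to the energy metrics discussed in the Executive Summary. The sketch below integrates a power trace over time with the trapezoidal rule and divides the result by the number of delivered bits to obtain energy per bit. It assumes timestamps in seconds and power in watts, so the `rf_pa_power` trace would first be converted from mW. The sample values and the bit count are invented for illustration.

```python
def energy_j(timestamps_s, power_w):
    """Integrate power over time with the trapezoidal rule (result in joules)."""
    total = 0.0
    for k in range(1, len(timestamps_s)):
        dt = timestamps_s[k] - timestamps_s[k - 1]
        total += 0.5 * (power_w[k] + power_w[k - 1]) * dt
    return total


def energy_per_bit_j(total_energy_j, delivered_bits):
    """Energy spent per delivered bit (joules per bit)."""
    if delivered_bits <= 0:
        raise ValueError("delivered_bits must be positive")
    return total_energy_j / delivered_bits


# Invented example: PA trace in mW sampled once per second.
t_s = [0.0, 1.0, 2.0]
pa_power_mw = [1000.0, 1000.0, 3000.0]
pa_power_w = [p / 1000.0 for p in pa_power_mw]

e_total = energy_j(t_s, pa_power_w)
print(f"PA energy: {e_total:.2f} J")
print(f"Energy per bit: {energy_per_bit_j(e_total, 1_000_000):.2e} J/bit")
```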